
Lab environment

Infrastructure

| Hostname | Role | IP |
|---|---|---|
| HDSS7-11.host.com | k8s proxy node 1 | 10.4.7.11 |
| HDSS7-12.host.com | k8s proxy node 2 | 10.4.7.12 |
| HDSS7-21.host.com | k8s compute node 1 | 10.4.7.21 |
| HDSS7-22.host.com | k8s compute node 2 | 10.4.7.22 |
| HDSS7-200.host.com | k8s ops node (Docker registry) | 10.4.7.200 |

Hardware environment

- 5 VMs, each with at least 2 CPU cores and 2 GB of RAM

Software environment

- OS: CentOS Linux release 7.6.1810 (Core)

- docker: v1.12.6

- kubernetes: v1.13.2

- etcd: v3.1.18

- flannel: v0.10.0

- bind9: v9.9.4

- harbor: v1.7.1

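- Certificate signing tool CFSSL: R1.2

- Other

Any other software that may be needed is installed from the operating system's default yum repositories and the EPEL repository.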

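Preparation

Installing and deploying the DNS service

- Create the host domain host.com

- Create the business domain od.com

- Master/slave synchronization (10.4.7.11 master, 10.4.7.12 slave)

- Point the clients at the self-hosted DNS

Omitted.

Preparing the certificate signing environment

On the ops host HDSS7-200.host.com:

Install CFSSL

- Certificate signing tool CFSSL: R1.2

`[root@hdss7-200 ~]# curl -s -L -o /usr/bin/cfssl https://pkg.cfssl.org/R1.2/cfssl_linux-amd64`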

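Create the JSON configuration file used to generate the CA certificate

`{`

Certificate types

client certificate: used by clients so that the server can authenticate them, for example etcdctl, etcd proxy, fleetctl, and the docker client

server certificate: used by servers so that clients can verify the server's identity, for example the docker daemon and kube-apiserver

peer certificate: a two-way certificate, used for communication between etcd cluster members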
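The three certificate types map onto cfssl signing profiles. The configuration file above is truncated in this copy, so the following is only a minimal sketch of what such a file could look like, not the author's actual file. The profile names match the `-profile=client`, `-profile=server`, and `-profile=peer` flags used later in this guide; the expiry value and the exact usage lists are assumptions.

```shell
#!/bin/sh
# Sketch only: writes an example cfssl signing config to a temporary file.
# The expiry value and usage lists are assumptions, not the original file.
example_config="$(mktemp)"
cat > "$example_config" <<'EOF'
{
  "signing": {
    "default": { "expiry": "175200h" },
    "profiles": {
      "server": {
        "expiry": "175200h",
        "usages": ["signing", "key encipherment", "server auth"]
      },
      "client": {
        "expiry": "175200h",
        "usages": ["signing", "key encipherment", "client auth"]
      },
      "peer": {
        "expiry": "175200h",
        "usages": ["signing", "key encipherment", "server auth", "client auth"]
      }
    }
  }
}
EOF
echo "wrote $example_config"
```

The peer profile carries both `server auth` and `client auth`, which is what lets etcd members authenticate each other in both directions.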

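Create the JSON configuration file for the CA certificate signing request (CSR)

`{`

CN: Common Name. Browsers use this field to verify that a website is legitimate, so it matters a great deal; it is usually set to the domain name.

C: Country

ST: State or province

L: Locality (region or city)

O: Organization Name (organization or company name)

OU: Organization Unit Name (organizational unit or company department)

Generate the CA certificate and private key

`[root@hdss7-200 certs]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca -`

This generates ca.pem, ca.csr, and ca-key.pem (the CA private key, which must be kept safe)

`[root@hdss7-200 certs]# ls -l`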

Deploying the Docker environment

On HDSS7-200.host.com, HDSS7-21.host.com, and HDSS7-22.host.com:

Install

- docker: v1.12.6

`# ls -l|grep docker-engine`

Configure

`# vi /etc/docker/daemon.json`

Note: the bip value here must change according to the host's IP
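The daemon.json content is truncated in this copy, so the exact bip values are not shown. The iptables step later in this guide uses 172.7.21.0/24 on host 10.4.7.21, which suggests the last octet of the host IP becomes the third octet of the bridge subnet. A minimal sketch under that assumption (`bip_for_host` is a hypothetical helper, not part of the original guide):

```shell
#!/bin/sh
# bip_for_host is a hypothetical helper, not part of the original guide.
# Assumption: host 10.4.7.X uses the docker bridge address 172.7.X.1/24.
bip_for_host() {
    host_ip="$1"
    last_octet="${host_ip##*.}"   # strip everything up to the last dot
    printf '172.7.%s.1/24\n' "$last_octet"
}

bip_for_host 10.4.7.21
bip_for_host 10.4.7.22
```

Deriving the value from the host IP keeps each node's container subnet unique, which the flannel setup later depends on.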

Startup script

`[Unit]`

Start

`# systemctl enable docker.service`

Deploying Harbor, the private Docker image registry

On HDSS7-200.host.com:

Download the binary package and extract it

`[root@hdss7-200 harbor]# tar xf harbor-offline-installer-v1.7.1.tgz -C /opt`

Configure

`hostname = harbor.od.com`

`ports:`

Install docker-compose

`[root@hdss7-200 harbor]# yum install docker-compose -y`

Install Harbor

`[root@hdss7-200 harbor]# ./install.sh`

Check that Harbor has started

`[root@hdss7-200 harbor]# docker-compose ps`

Configure internal DNS resolution for Harbor

`harbor 60 IN A 10.4.7.200`

Check

`[root@hdss7-200 harbor]# dig -t A harbor.od.com @10.4.7.11 +short`

Installing and configuring nginx

Install

`[root@hdss7-200 harbor]# yum install nginx -y`

Configure

`server {`

Note: a self-signed SSL certificate is required here; the full signing process is omitted

`(umask 077; openssl genrsa -out od.key 2048)`

`openssl req -new -key od.key -out od.csr -subj "/CN=*.od.com/ST=Beijing/L=beijing/O=od/OU=ops"`

`openssl x509 -req -in od.csr -CA ca.crt -CAkey ca.key -CAcreateserial -out od.crt -days 365`

Start

`[root@hdss7-200 harbor]# nginx`

Open http://harbor.od.com in a browser

- Username: admin

- Password: Harbor12345

Deploying the Master node services

Deploying the etcd cluster

Cluster plan

| Hostname | Role | IP |
|---|---|---|
| HDSS7-12.host.com | etcd leader | 10.4.7.12 |
| HDSS7-21.host.com | etcd follower | 10.4.7.21 |
| HDSS7-22.host.com | etcd follower | 10.4.7.22 |

Note: this guide uses HDSS7-12.host.com as the example; the other two hosts are installed and deployed in a similar way.

Create the JSON configuration file for the certificate signing request (CSR)

On the ops host HDSS7-200.host.com:

`{`

Generate the etcd certificate and private key

`[root@hdss7-200 certs]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=peer etcd-peer-csr.json | cfssljson -bare etcd-peer`

Check the generated certificate and private key

`[root@hdss7-200 certs]# ls -l|grep etcd`

Create the etcd user

On HDSS7-12.host.com:

`[root@hdss7-12 ~]# useradd -s /sbin/nologin -M etcd`

Download the software, extract it, and create a symlink

etcd download address

On HDSS7-12.host.com:

`[root@hdss7-12 src]# ls -l`

Create directories and copy the certificate and private key

On HDSS7-12.host.com:

`[root@hdss7-12 src]# mkdir -p /data/etcd /data/logs/etcd-server`

Copy ca.pem, etcd-peer-key.pem, and etcd-peer.pem generated on the ops host into the /opt/etcd/certs directory, and make sure the private key file has mode 600

`[root@hdss7-12 certs]# chmod 600 etcd-peer-key.pem`
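Since the same mode-600 requirement comes up again for the kube-apiserver, kubelet, and kube-proxy keys, a small check can help catch a key that was copied with looser permissions. This is a minimal sketch; `check_key_mode` is a hypothetical helper and it assumes GNU `stat`, as shipped with CentOS.

```shell
#!/bin/sh
# check_key_mode is a hypothetical helper: it succeeds only if the file's
# permission bits are exactly 600. Assumes GNU stat (stat -c).
check_key_mode() {
    mode="$(stat -c '%a' "$1")" || return 1
    [ "$mode" = "600" ]
}

# Demonstration on a throwaway file rather than a real private key.
demo_key="$(mktemp)"
chmod 644 "$demo_key"
check_key_mode "$demo_key" || echo "too permissive: $demo_key"
chmod 600 "$demo_key"
check_key_mode "$demo_key" && echo "ok: $demo_key"
rm -f "$demo_key"
```

On a real node the function would be pointed at a key file such as etcd-peer-key.pem in /opt/etcd/certs.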

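Create the etcd service startup script

On HDSS7-12.host.com:

`#!/bin/sh`

Note: the startup script differs slightly on each host in the etcd cluster; remember to adjust it when deploying the other nodes.

Adjust permissions and directories

On HDSS7-12.host.com:

`[root@hdss7-12 certs]# chmod +x /opt/etcd/etcd-server-startup.sh`

Install supervisor

On HDSS7-12.host.com:

`[root@hdss7-12 certs]# yum install supervisor -y`

Create the startup configuration for etcd-server

On HDSS7-12.host.com:

`[program:etcd-server-7-12]`

Note: the startup configuration differs slightly on each host in the etcd cluster; remember to adjust it when configuring the other nodes.

Start the etcd service and check it

On HDSS7-12.host.com:

`[root@hdss7-12 certs]# supervisorctl start all`

Install, deploy, start, and check the etcd service on all hosts in the cluster plan

Omitted.

Check the cluster status

Once all three hosts are up, check the cluster status

`[root@hdss7-12 ~]# /opt/etcd/etcdctl cluster-health`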

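Deploying the kube-apiserver cluster

Cluster plan

| Hostname | Role | IP |
|---|---|---|
| HDSS7-21.host.com | kube-apiserver | 10.4.7.21 |
| HDSS7-22.host.com | kube-apiserver | 10.4.7.22 |
| HDSS7-11.host.com | Layer-4 load balancer | 10.4.7.11 |
| HDSS7-12.host.com | Layer-4 load balancer | 10.4.7.12 |

Note: 10.4.7.11 and 10.4.7.12 run nginx as a layer-4 load balancer, with keepalived providing a VIP, 10.4.7.10, that proxies the two kube-apiserver instances for high availability.

This guide uses HDSS7-21.host.com as the example; the other compute node is installed and deployed in a similar way.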

Download the software, extract it, and create a symlink

On HDSS7-21.host.com:

kubernetes download address

`[root@hdss7-21 src]# ls -l|grep kubernetes`

Issue the client certificate

On the ops host HDSS7-200.host.com:

Create the JSON configuration file for the certificate signing request (CSR)

`{`

Generate the client certificate and private key

`[root@hdss7-200 certs]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=client client-csr.json | cfssljson -bare client`

Check the generated certificate and private key

`[root@hdss7-200 certs]# ls -l|grep client`

Issue the kube-apiserver certificate

On the ops host HDSS7-200.host.com:

Create the JSON configuration file for the certificate signing request (CSR)

`{`

Generate the kube-apiserver certificate and private key

`[root@hdss7-200 certs]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=server apiserver-csr.json | cfssljson -bare apiserver`

Check the generated certificate and private key

`[root@hdss7-200 certs]# ls -l|grep apiserver`

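Copy the certificates to each compute node and create the configuration

On HDSS7-21.host.com:

Copy the certificates and private keys; make sure the private key files have mode 600

`[root@hdss7-21 cert]# ls -l /opt/kubernetes/server/bin/cert`

Create the configuration

`apiVersion: audit.k8s.io/v1beta1 # This is required.`

Create the startup script

On HDSS7-21.host.com:

`#!/bin/bash`

Adjust permissions and directories

On HDSS7-21.host.com:

`[root@hdss7-21 bin]# chmod +x /opt/kubernetes/server/bin/kube-apiserver.sh`

Create the supervisor configuration

On HDSS7-21.host.com:

`[program:kube-apiserver]`

Start the service and check it

On HDSS7-21.host.com:

`[root@hdss7-21 bin]# supervisorctl update`

Install, deploy, start, and check kube-apiserver on all hosts in the cluster plan

Omitted.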

Configure the layer-4 reverse proxy

On HDSS7-11.host.com and HDSS7-12.host.com:

nginx configuration

`stream {`
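The stream block above is truncated in this copy. As an illustration of how a layer-4 proxy in front of two kube-apiserver instances might be laid out, here is a minimal sketch that prints such a block. The listen port 7443 comes from the netstat check later in this section; the backend port 6443, the upstream name, and the timeout values are assumptions, and `render_stream_conf` is a hypothetical helper.

```shell
#!/bin/sh
# render_stream_conf is a hypothetical helper that prints an nginx "stream"
# block. Assumptions: backend port 6443, the upstream name "kube-apiserver",
# and the timeout values are illustrative, not taken from the original file.
render_stream_conf() {
    listen_port="$1"
    shift
    echo "stream {"
    echo "    upstream kube-apiserver {"
    for backend in "$@"; do
        echo "        server ${backend}:6443 max_fails=3 fail_timeout=30s;"
    done
    echo "    }"
    echo "    server {"
    echo "        listen ${listen_port};"
    echo "        proxy_connect_timeout 2s;"
    echo "        proxy_timeout 900s;"
    echo "        proxy_pass kube-apiserver;"
    echo "    }"
    echo "}"
}

render_stream_conf 7443 10.4.7.21 10.4.7.22
```

Because the block works at the TCP level, TLS is passed straight through to kube-apiserver and nginx needs no certificates of its own for this listener.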

keepalived configuration

check_port.sh

`#!/bin/bash`

keepalived master

On HDSS7-11.host.com:

`[root@hdss7-11 ~]# rpm -qa keepalived`

`! Configuration File for keepalived`

keepalived backup

On HDSS7-12.host.com:

`[root@hdss7-12 ~]# rpm -qa keepalived`

`! Configuration File for keepalived`

Start the proxy and check it

On HDSS7-11.host.com and HDSS7-12.host.com:

Start

`[root@hdss7-11 ~]# systemctl start keepalived`

`[root@hdss7-11 ~]# systemctl enable keepalived`

`[root@hdss7-11 ~]# nginx -s reload`

`[root@hdss7-12 ~]# systemctl start keepalived`

`[root@hdss7-12 ~]# systemctl enable keepalived`

`[root@hdss7-12 ~]# nginx -s reload`

Check

`[root@hdss7-11 ~]# netstat -luntp|grep 7443`

`tcp 0 0 0.0.0.0:7443 0.0.0.0:* LISTEN 17970/nginx: master`

`[root@hdss7-12 ~]# netstat -luntp|grep 7443`

`tcp 0 0 0.0.0.0:7443 0.0.0.0:* LISTEN 17970/nginx: master`

`[root@hdss7-11 ~]# ip add|grep 10.4.7.10`

`inet 10.4.7.10/32 scope global vir0`

`[root@hdss7-12 ~]# ip add|grep 10.4.7.10`

(empty)

Deploying controller-manager

Cluster plan

| Hostname | Role | IP |
|---|---|---|
| HDSS7-21.host.com | controller-manager | 10.4.7.21 |
| HDSS7-22.host.com | controller-manager | 10.4.7.22 |

Note: this guide uses HDSS7-21.host.com as the example; the other compute node is installed and deployed in a similar way.

Create the startup script

On HDSS7-21.host.com:

`#!/bin/sh`

Adjust file permissions and create directories

On HDSS7-21.host.com:

`[root@hdss7-21 bin]# chmod +x /opt/kubernetes/server/bin/kube-controller-manager.sh`

Create the supervisor configuration

On HDSS7-21.host.com:

`[program:kube-controller-manager]`

Start the service and check it

On HDSS7-21.host.com:

`[root@hdss7-21 bin]# supervisorctl update`

Install, deploy, start, and check the kube-controller-manager service on all hosts in the cluster plan

Omitted.

Deploying kube-scheduler

Cluster plan

| Hostname | Role | IP |
|---|---|---|
| HDSS7-21.host.com | kube-scheduler | 10.4.7.21 |
| HDSS7-22.host.com | kube-scheduler | 10.4.7.22 |

Note: this guide uses HDSS7-21.host.com as the example; the other compute node is installed and deployed in a similar way.

Create the startup script

On HDSS7-21.host.com:

`#!/bin/sh`

Adjust file permissions and create directories

On HDSS7-21.host.com:

`[root@hdss7-21 bin]# chmod +x /opt/kubernetes/server/bin/kube-scheduler.sh`

Create the supervisor configuration

On HDSS7-21.host.com:

`[program:kube-scheduler]`

Start the service and check it

On HDSS7-21.host.com:

`[root@hdss7-21 bin]# supervisorctl update`

Install, deploy, start, and check the kube-scheduler service on all hosts in the cluster plan

Omitted.

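Deploying the Node services

Deploying kubelet

Cluster plan

| Hostname | Role | IP |
|---|---|---|
| HDSS7-21.host.com | kubelet | 10.4.7.21 |
| HDSS7-22.host.com | kubelet | 10.4.7.22 |

Note: this guide uses HDSS7-21.host.com as the example; the other compute node is installed and deployed in a similar way.

Issue the kubelet certificate

On the ops host HDSS7-200.host.com:

Create the JSON configuration file for the certificate signing request (CSR)

`{`

Generate the kubelet certificate and private key

`[root@hdss7-200 certs]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=server kubelet-csr.json | cfssljson -bare kubelet`

Check the generated certificate and private key

`[root@hdss7-200 certs]# ls -l|grep kubelet`

Copy the certificates to each compute node and create the configuration

On HDSS7-21.host.com:

Copy the certificates and private keys; make sure the private key files have mode 600

`[root@hdss7-21 cert]# ls -l /opt/kubernetes/server/bin/cert`

Create the configuration

On HDSS7-21.host.com:

Create a symlink for kubectl

`[root@hdss7-21 bin]# ln -s /opt/kubernetes/server/bin/kubectl /usr/bin/kubectl`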

set-cluster

Note: run this in the conf directory

`[root@hdss7-21 conf]# kubectl config set-cluster myk8s \`

set-credentials

Note: run this in the conf directory

`[root@hdss7-21 conf]# kubectl config set-credentials k8s-node --client-certificate=/opt/kubernetes/server/bin/cert/client.pem --client-key=/opt/kubernetes/server/bin/cert/client-key.pem --embed-certs=true --kubeconfig=kubelet.kubeconfig`

set-context

Note: run this in the conf directory

`[root@hdss7-21 conf]# kubectl config set-context myk8s-context \`

use-context

Note: run this in the conf directory

`[root@hdss7-21 conf]# kubectl config use-context myk8s-context --kubeconfig=kubelet.kubeconfig`
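The four steps above (set-cluster, set-credentials, set-context, use-context) build one kubeconfig file. Two of the commands are truncated in this copy, so the following is a sketch of how the sequence might be scripted rather than a reconstruction of the original. The API server URL https://10.4.7.10:7443 (the VIP plus the proxy port checked earlier) and the ca.pem path are assumptions; `run` and `DRY_RUN` are hypothetical additions that print each command instead of executing it.

```shell
#!/bin/sh
# Sketch only. Assumed values: the API server URL and the CA file path.
CERT_DIR=/opt/kubernetes/server/bin/cert
KUBECONFIG_FILE=kubelet.kubeconfig
APISERVER_URL=https://10.4.7.10:7443   # assumption: VIP + proxy port

# run is a hypothetical wrapper: unless DRY_RUN=0 it only prints the command.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

build_kubelet_kubeconfig() {
    run kubectl config set-cluster myk8s \
        --certificate-authority="$CERT_DIR/ca.pem" \
        --embed-certs=true \
        --server="$APISERVER_URL" \
        --kubeconfig="$KUBECONFIG_FILE"
    run kubectl config set-credentials k8s-node \
        --client-certificate="$CERT_DIR/client.pem" \
        --client-key="$CERT_DIR/client-key.pem" \
        --embed-certs=true \
        --kubeconfig="$KUBECONFIG_FILE"
    run kubectl config set-context myk8s-context \
        --cluster=myk8s \
        --user=k8s-node \
        --kubeconfig="$KUBECONFIG_FILE"
    run kubectl config use-context myk8s-context \
        --kubeconfig="$KUBECONFIG_FILE"
}

# Prints the four commands; set DRY_RUN=0 to actually invoke kubectl.
build_kubelet_kubeconfig
```

Defaulting to dry-run is a deliberate choice here: the printed commands can be compared against the guide before anything is written to kubelet.kubeconfig.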

k8s-node.yaml

- Create the resource manifest

`apiVersion: rbac.authorization.k8s.io/v1`

- Apply the resource manifest

`[root@hdss7-21 conf]# kubectl create -f k8s-node.yaml`

- Check

`[root@hdss7-21 conf]# kubectl get clusterrolebinding k8s-node`

Preparing the infra_pod base image

On the ops host HDSS7-200.host.com:

Download

`[root@hdss7-200 ~]# docker pull xplenty/rhel7-pod-infrastructure:v3.4`

Push it to the private registry (Harbor)

- Configure the host to log in to the private registry

`{`

This encodes: username admin, password Harbor12345

`[root@hdss7-200 ~]# echo YWRtaW46SGFyYm9yMTIzNDU=|base64 -d`

`admin:Harbor12345`

Note: alternatively, run docker login harbor.od.com on each compute node and enter the username and password
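The decode command above can be run in reverse to produce the encoded string for a different account. A minimal sketch (`encode_registry_auth` is a hypothetical helper; `printf` is used because `echo` would append a newline that ends up inside the encoded value):

```shell
#!/bin/sh
# encode_registry_auth is a hypothetical helper that base64-encodes
# "user:password" without a trailing newline.
encode_registry_auth() {
    printf '%s:%s' "$1" "$2" | base64
}

encode_registry_auth admin Harbor12345
# prints YWRtaW46SGFyYm9yMTIzNDU=
```

Note that base64 is an encoding, not encryption, so a file holding this string should be protected like a plaintext password.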

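- Tag the image

`[root@hdss7-200 ~]# docker images|grep v3.4`

- Push to Harbor

`[root@hdss7-200 ~]# docker push harbor.od.com/k8s/pod:v3.4`

Create the kubelet startup script

On HDSS7-21.host.com:

`#!/bin/sh`

Note: the kubelet startup script differs slightly on each host; remember to adjust it when deploying the other nodes.

Check the configuration and permissions, and create the log directory

On HDSS7-21.host.com:

`[root@hdss7-21 conf]# ls -l|grep kubelet.kubeconfig`

Create the supervisor configuration

On HDSS7-21.host.com:

`[program:kube-kubelet]`

Start the service and check it

On HDSS7-21.host.com:

`[root@hdss7-21 bin]# supervisorctl update`

Check the compute nodes

On HDSS7-21.host.com:

`[root@hdss7-21 bin]# kubectl get node`

Very important!

Install, deploy, start, and check the kubelet service on all hosts in the cluster plan

Omitted.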

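Deploying kube-proxy

Cluster plan

| Hostname | Role | IP |
|---|---|---|
| HDSS7-21.host.com | kube-proxy | 10.4.7.21 |
| HDSS7-22.host.com | kube-proxy | 10.4.7.22 |

Note: this guide uses HDSS7-21.host.com as the example; the other compute node is installed and deployed in a similar way.

Issue the kube-proxy certificate

On the ops host HDSS7-200.host.com:

Create the JSON configuration file for the certificate signing request (CSR)

`{`

Generate the kube-proxy certificate and private key

`[root@hdss7-200 certs]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=client kube-proxy-csr.json | cfssljson -bare kube-proxy-client`

Check the generated certificate and private key

`[root@hdss7-200 certs]# ls -l|grep kube-proxy`

Copy the certificates to each compute node and create the configuration

On HDSS7-21.host.com:

Copy the certificates and private keys; make sure the private key files have mode 600

`[root@hdss7-21 cert]# ls -l /opt/kubernetes/server/bin/cert`

Create the configuration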

set-cluster

Note: run this in the conf directory

`[root@hdss7-21 conf]# kubectl config set-cluster myk8s \`

set-credentials

Note: run this in the conf directory

`[root@hdss7-21 conf]# kubectl config set-credentials kube-proxy \`

set-context

Note: run this in the conf directory

`[root@hdss7-21 conf]# kubectl config set-context myk8s-context \`

use-context

Note: run this in the conf directory

`[root@hdss7-21 conf]# kubectl config use-context myk8s-context --kubeconfig=kube-proxy.kubeconfig`

Create the kube-proxy startup script

On HDSS7-21.host.com:

`#!/bin/sh`

Note: the kube-proxy startup script differs slightly on each host; remember to adjust it when deploying the other nodes.

Check the configuration and permissions, and create the log directory

On HDSS7-21.host.com:

`[root@hdss7-21 conf]# ls -l|grep kube-proxy.kubeconfig`

Create the supervisor configuration

On HDSS7-21.host.com:

`[program:kube-proxy]`

Start the service and check it

On HDSS7-21.host.com:

`[root@hdss7-21 bin]# supervisorctl update`

Install, deploy, start, and check the kube-proxy service on all hosts in the cluster plan

Omitted.

Deploying addons

Verifying the kubernetes cluster

On any compute node, create a resource manifest

Here we use host HDSS7-21.host.com

`apiVersion: v1`

Apply the resource manifest and check

`[root@hdss7-21 ~]# kubectl create -f nginx-ds.yaml`

Verify

To be added.

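Deploying flannel

Cluster plan

| Hostname | Role | IP |
|---|---|---|
| HDSS7-21.host.com | flannel | 10.4.7.21 |
| HDSS7-22.host.com | flannel | 10.4.7.22 |

Note: this guide uses HDSS7-21.host.com as the example; the other compute node is installed and deployed in a similar way.

Add iptables rules on each compute node

Note: the iptables rules differ slightly on each host; remember to adjust them when running on the other compute nodes.

- Optimize the SNAT rule so that pod-to-pod traffic between compute nodes is no longer SNATed

`# iptables -t nat -D POSTROUTING -s 172.7.21.0/24 ! -o docker0 -j MASQUERADE`

On host 10.4.7.21, SNAT is applied only to packets whose source is a Docker IP in 172.7.21.0/24, whose destination is not in 172.7.0.0/16, and which do not leave through the docker0 bridge device.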
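The replacement rule itself is cut off in this copy, but the description above (source in the node's /24, destination outside 172.7.0.0/16, not leaving through docker0) can be written as a single iptables rule. The sketch below only prints that rule for a given host; `snat_rule_for_host` is a hypothetical helper, and the mapping from host 10.4.7.X to pod subnet 172.7.X.0/24 is an assumption based on the 10.4.7.21 example.

```shell
#!/bin/sh
# snat_rule_for_host is a hypothetical helper that prints (does not apply)
# the refined rule. Assumption: host 10.4.7.X owns pod subnet 172.7.X.0/24.
snat_rule_for_host() {
    host_ip="$1"
    last_octet="${host_ip##*.}"
    echo "iptables -t nat -I POSTROUTING -s 172.7.${last_octet}.0/24 ! -d 172.7.0.0/16 ! -o docker0 -j MASQUERADE"
}

snat_rule_for_host 10.4.7.21
snat_rule_for_host 10.4.7.22
```

The added `! -d 172.7.0.0/16` match is what keeps pod source addresses visible to pods on other nodes, while traffic to anything outside the pod network is still masqueraded.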

Save the iptables rules on each compute node

`[root@hdss7-21 ~]# iptables-save > /etc/sysconfig/iptables`

Download the software, extract it, and create a symlink

On HDSS7-21.host.com:

`[root@hdss7-21 src]# ls -l|grep flannel`

Final directory layout

`[root@hdss7-21 opt]# tree -L 2`

Configure etcd to add the host-gw backend

On HDSS7-21.host.com:

`[root@hdss7-21 etcd]# ./etcdctl set /coreos.com/network/config '{"Network": "172.7.0.0/16", "Backend": {"Type": "host-gw"}}'`

Create the configuration

On HDSS7-21.host.com:

`FLANNEL_NETWORK=172.7.0.0/16`

Note: the flannel configuration differs slightly on each host; remember to adjust it when deploying the other nodes.

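Create the startup script

On HDSS7-21.host.com:

`#!/bin/sh`

Note: the flannel startup script differs slightly on each host; remember to adjust it when deploying the other nodes.

Check the configuration and permissions, and create the log directory

On HDSS7-21.host.com:

`[root@hdss7-21 flannel]# chmod +x /opt/flannel/flanneld.sh`

Create the supervisor configuration

On HDSS7-21.host.com:

`[program:flanneld]`

Start the service and check it

On HDSS7-21.host.com:

`[root@hdss7-21 flanneld]# supervisorctl update`

Install, deploy, start, and check the flannel service on all hosts in the cluster plan

Omitted.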

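Verify the cluster again

Deploying an internal HTTP service for k8s resource manifests

On the ops host HDSS7-200.host.com, configure an nginx virtual host that serves as the single access point for k8s resource manifests

`server {`

Configure internal DNS resolution

On HDSS7-11.host.com:

`k8s-yaml 60 IN A 10.4.7.200`

From now on, all resource manifests are placed under the /data/k8s-yaml directory on the ops host

`[root@hdss7-200 ~]# nginx -s reload`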

Deploying kube-dns (coredns)

Prepare the coredns-v1.3.1 image

On the ops host HDSS7-200.host.com:

`[root@hdss7-200 ~]# docker pull coredns/coredns:1.3.1`

On any compute node:

`[root@hdss7-21 ~]# kubectl create secret docker-registry harbor --docker-server=harbor.od.com --docker-username=admin --docker-password=Harbor12345 --docker-email=stanley.wang.m@qq.com -n kube-system`

Prepare the resource manifests

On the ops host HDSS7-200.host.com:

`[root@hdss7-200 ~]# mkdir -p /data/k8s-yaml/coredns && cd /data/k8s-yaml/coredns`

vi /data/k8s-yaml/coredns/rbac.yaml

`apiVersion: v1`

vi /data/k8s-yaml/coredns/configmap.yaml

`apiVersion: v1`

vi /data/k8s-yaml/coredns/deployment.yaml

`apiVersion: extensions/v1beta1`

vi /data/k8s-yaml/coredns/svc.yaml

`apiVersion: v1`

Create the resources in order

Open http://k8s-yaml.od.com/coredns in a browser to check that the resource manifest files were created correctly

Apply the resource manifests on any compute node

`[root@hdss7-21 ~]# kubectl apply -f http://k8s-yaml.od.com/coredns/rbac.yaml`

Check

`[root@hdss7-21 ~]# kubectl get pods -n kube-system -o wide`

Deploying traefik (ingress)

Prepare the traefik image

On the ops host HDSS7-200.host.com:

`[root@hdss7-200 ~]# docker pull traefik:v1.7-alpine`

Prepare the resource manifests

On the ops host HDSS7-200.host.com:

`[root@hdss7-200 ~]# mkdir -p /data/k8s-yaml/traefik && cd /data/k8s-yaml/traefik`

vi /data/k8s-yaml/traefik/rbac.yaml

`apiVersion: v1`

vi /data/k8s-yaml/traefik/daemonset.yaml

`apiVersion: extensions/v1beta1`

vi /data/k8s-yaml/traefik/svc.yaml

`kind: Service`

vi /data/k8s-yaml/traefik/ingress.yaml

`apiVersion: extensions/v1beta1`

Resolve the domain name

On HDSS7-11.host.com:

`traefik 60 IN A 10.4.7.10`

Create the resources in order

Open http://k8s-yaml.od.com/traefik in a browser to check that the resource manifest files were created correctly

Apply the resource manifests on any compute node

`[root@hdss7-21 ~]# kubectl apply -f http://k8s-yaml.od.com/traefik/rbac.yaml`

Configure the reverse proxy

nginx must be configured on both HDSS7-11.host.com and HDSS7-12.host.com; consider using saltstack or ansible to manage this configuration centrally

`upstream default_backend_traefik {`

Access it in a browser

部署dashboard

准备dashboard镜像

运维主机HDSS7-200.host.com上:

1 | [root@hdss7-200 ~]# docker pull k8scn/kubernetes-dashboard-amd64:v1.8.3 |

准备资源配置清单

运维主机HDSS7-200.host.com上:

1 | [root@hdss7-200 ~]# mkdir -p /data/k8s-yaml/dashboard && cd /data/k8s-yaml/dashboard |

vi /data/k8s-yaml/dashboard/rbac.yaml

1 | apiVersion: v1 |

vi /data/k8s-yaml/dashboard/secret.yaml

1 | apiVersion: v1 |

vi /data/k8s-yaml/dashboard/configmap.yaml

1 | apiVersion: v1 |

vi /data/k8s-yaml/dashboard/svc.yaml

1 | apiVersion: v1 |

vi /data/k8s-yaml/dashboard/ingress.yaml

1 | apiVersion: extensions/v1beta1 |

vi /data/k8s-yaml/dashboard/deployment.yaml

1 | apiVersion: apps/v1 |

解析域名

HDSS7-11.host.com上

1 | dashboard 60 IN A 10.4.7.10 |

依次执行创建

浏览器打开:http://k8s-yaml.od.com/dashboard 检查资源配置清单文件是否正确创建

在任意运算节点应用资源配置清单

1 | [root@hdss7-21 ~]# kubectl apply -f http://k8s-yaml.od.com/dashboard/rbac.yaml |

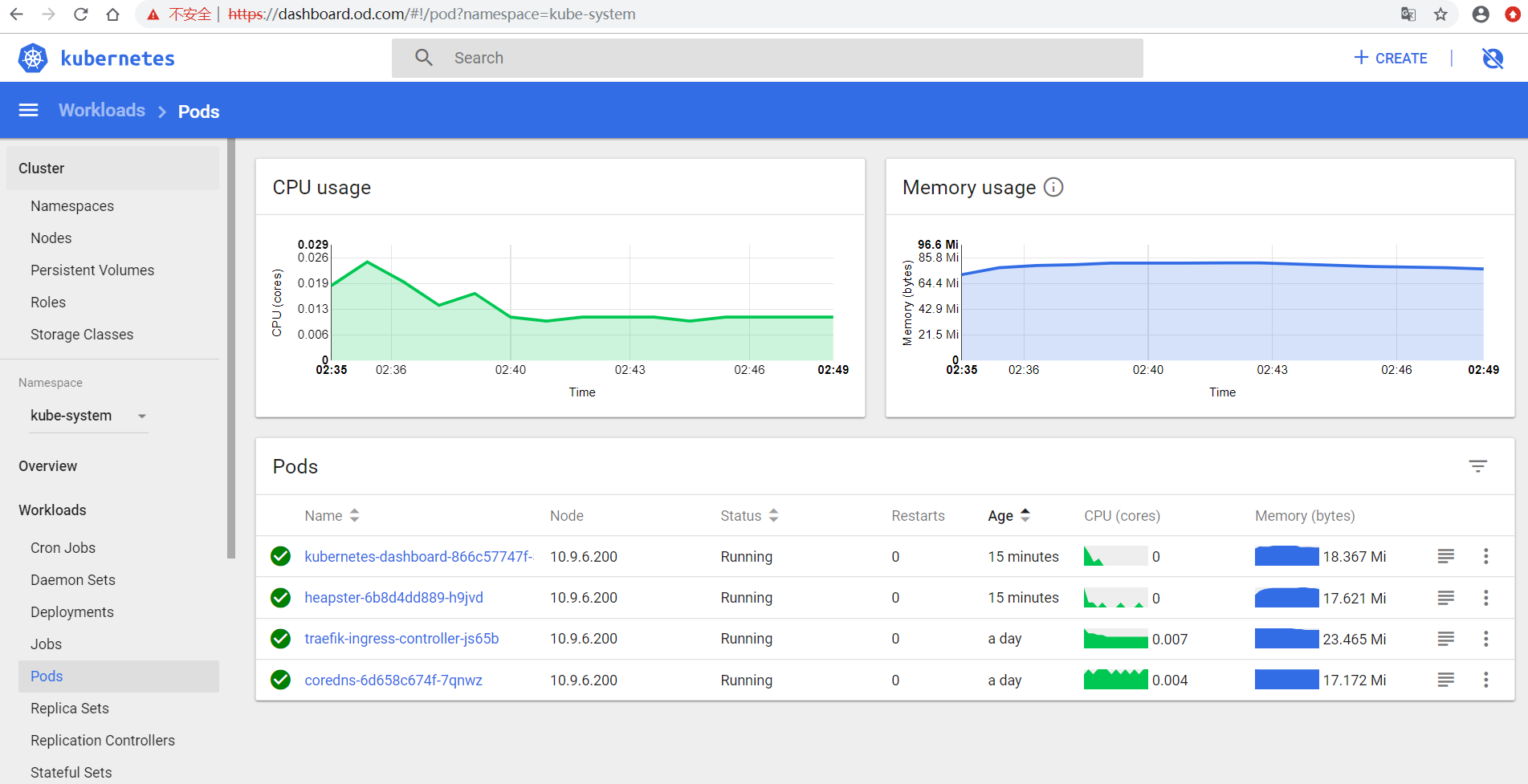

浏览器访问

Configure authentication

- Download the newer dashboard version

`[root@hdss7-200 ~]# docker pull hexun/kubernetes-dashboard-amd64:v1.10.1`

Apply the newer dashboard version

Modify the nginx configuration to use HTTPS

`server {`

- Get the token

`[root@hdss7-21 ~]# kubectl describe secret kubernetes-dashboard-admin-token-rhr62 -n kube-system`

Deploying heapster

Prepare the heapster image

On the ops host HDSS7-200.host.com:

`[root@hdss7-200 ~]# docker pull quay.io/bitnami/heapster:1.5.4`

Prepare the resource manifests

vi /data/k8s-yaml/dashboard/heapster/rbac.yaml

`apiVersion: v1`

vi /data/k8s-yaml/dashboard/heapster/deployment.yaml

`apiVersion: extensions/v1beta1`

vi /data/k8s-yaml/dashboard/heapster/svc.yaml

`apiVersion: v1`

Apply the resource manifests

On any compute node:

`[root@hdss7-21 ~]# kubectl apply -f http://k8s-yaml.od.com/dashboard/heapster/rbac.yaml`

Restart the dashboard

Open http://dashboard.od.com in a browser

Troubleshooting command

``for j in `kubectl get ns|sed '1d'|awk '{print $1}'`;do for i in `kubectl get pods -n $j|grep -iv running|sed '1d'|awk '{print $1}'`;do kubectl delete pods $i -n $j --force --grace-period=0;done;done``
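The one-liner above force-deletes every pod whose line does not contain "running", which also removes pods in the Completed state. As a more conservative variant, the selection step can be separated out and inspected before anything is deleted. This is a sketch, not the author's command: `list_unhealthy_pods` is a hypothetical filter and the sample input below is made up for illustration.

```shell
#!/bin/sh
# list_unhealthy_pods is a hypothetical filter. It reads the output of
#   kubectl get pods --all-namespaces --no-headers
# on stdin (columns: NAMESPACE NAME READY STATUS ...) and prints
# "namespace pod" for every pod that is neither Running nor Completed.
list_unhealthy_pods() {
    awk '$4 != "Running" && $4 != "Completed" { print $1, $2 }'
}

# Made-up sample input, used only to show what the filter selects.
printf '%s\n' \
    'kube-system coredns-abc 1/1 Running 0 1d' \
    'default nginx-ds-xyz 0/1 CrashLoopBackOff 5 1h' \
    'default job-123 0/1 Completed 0 2h' |
    list_unhealthy_pods

# To act on a real cluster (destructive), something like:
# kubectl get pods --all-namespaces --no-headers | list_unhealthy_pods |
#     while read -r ns pod; do
#         kubectl delete pod "$pod" -n "$ns" --force --grace-period=0
#     done
```

Matching on the STATUS column rather than grepping the whole line also avoids skipping a pod that merely has "running" in its name.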